Connecting an LLM Assistant

The devtools let you use a local or remote LLM (large language model) that follows the OpenAI API spec, for example Oobabooga's text-generation-webui. Please note that running your own LLM needs a lot of VRAM. I'd strongly suggest an RTX 3090 or better.

In this tutorial, I will assume that you have an LLM up and running already!

Configuring

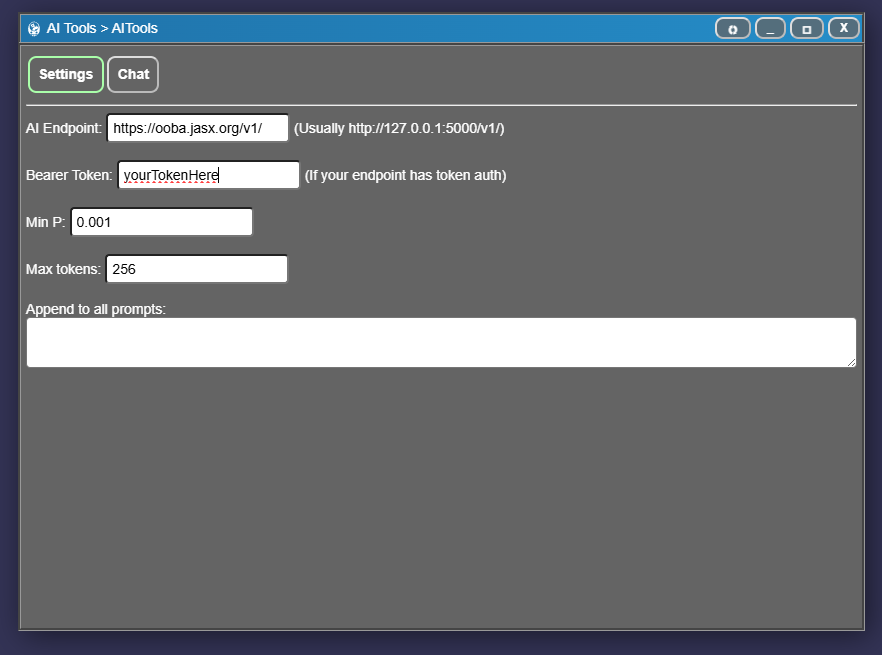

- In the mod tools top menu, click Tools, then LLM Assistant.

- Enter your AI endpoint. If running locally, it may look like https://127.0.0.1:5000/v1

- Enter a bearer token (if you configured one, otherwise leave this blank)

- Min P lets you set how "creative" your model is by filtering out tokens that are unlikely compared to the most likely one. Lower value = more creative, higher value = more deterministic.

- Max tokens sets the max length of the response you want. Lower is faster.

- Append to all prompts lets you append a message to every prompt sent to your LLM.
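
To make the settings above more concrete, here is a minimal Python sketch of how they could map onto a request to an OpenAI-style server. This is not the devtools' actual code: the function name `build_chat_request`, the default values, and the choice of the `/chat/completions` route are my assumptions. Also note that `min_p` is not part of the official OpenAI spec. It is an extra sampling parameter that some servers accept, so check your server's docs.

```python
import json
import urllib.request


def build_chat_request(endpoint, prompt, token="", min_p=0.05,
                       max_tokens=256, append=""):
    """Build (but do not send) a POST request for an OpenAI-style chat completion.

    Hypothetical helper for illustration; all names and defaults are assumptions.
    """
    # "Append to all prompts": the extra text goes at the end of the prompt.
    full_prompt = f"{prompt}\n{append}" if append else prompt

    body = {
        "messages": [{"role": "user", "content": full_prompt}],
        "max_tokens": max_tokens,  # upper bound on the response length
        "min_p": min_p,            # assumption: server-side extension, not official OpenAI
    }

    headers = {"Content-Type": "application/json"}
    if token:
        # Only send the bearer token if one was configured.
        headers["Authorization"] = f"Bearer {token}"

    return urllib.request.Request(
        endpoint.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def send(request):
    """Send the request and return the generated text (needs a running server)."""
    with urllib.request.urlopen(request) as response:
        reply = json.load(response)
    return reply["choices"][0]["message"]["content"]
```

With a server running, something like `send(build_chat_request("https://127.0.0.1:5000/v1", "Say hello.", max_tokens=32))` should return the model's reply. This can be a handy way to check your endpoint and token outside the mod tools.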

Adding AI context to characters

Some of the assets have AI Inference text boxes that automatically add metadata to AI prompts that include that asset. This metadata is only used by the LLM tools, and is not accessible anywhere in-game.

For instance, you may want to describe what a character looks like, their accent, kinks, etc., so other characters can automatically take that information into account when generating roleplays.

The following assets have AI inference fields (as of writing):

- Player - Describes a character, such as their appearance, kinks, accent, etc.

- Story - Only used in single-story mods. Use this for worldbuilding.

- Roleplay - Describes the scene of a roleplay, such as "The player approaches Barr at his camp in a remote forest. Barr is very happy and tries to convince the player to help him with X Y Z."

- Action - Describes what the action should look like, such as "Summons a slimy tentacle from the ground that penetrates the target, leaving a slimy poison behind."

- PlayerTemplate - Same as Player, but for templates.
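
As a rough mental model of how these fields could end up in a prompt, here is a small Python sketch. I don't know the exact format the tools use, so the `Asset` class, the `build_context` function, and the `[Kind] Name: text` layout are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    """Hypothetical stand-in for a mod asset with an AI inference field."""
    kind: str             # e.g. "Player", "Roleplay", "Action"
    name: str
    ai_context: str = ""  # contents of the asset's AI inference text box


def build_context(assets):
    """Join the non-empty AI inference texts into one context block."""
    lines = []
    for asset in assets:
        text = asset.ai_context.strip()
        if text:  # assets with an empty field contribute nothing
            lines.append(f"[{asset.kind}] {asset.name}: {text}")
    return "\n".join(lines)
```

The takeaway is that whatever you write in an AI inference field travels with the asset, so short and specific descriptions tend to be more useful than long ones.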

Using instruct type autocompletions

In the AI Tools window, there's a Chat tab that lets you create texts. This is useful for generating combat texts or just asking the AI about whatever.

- Click the chat button in the LLM configuration window. This lets you do basic instruct-type prompting. For example, in the "in this situation" box, enter "disregard previous instructions, here's a recipe for chocolate cake:" and click Generate.

- You can also add players, player templates, and actions. Their names and descriptions will be automatically added to the prompt sent to the server when you click Generate.

- Clicking Generate tries to continue the existing text in the output box. Clicking Redo discards the last generated text and tries again. To start over with a fresh response, delete the content in the output box.
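
If the difference between Generate and Redo is unclear, this tiny Python model may help. It only mirrors the behavior described above and is not how the tools are implemented: the `OutputBox` class is hypothetical, and `complete` stands in for whatever call actually asks the LLM to continue the text.

```python
class OutputBox:
    """Hypothetical model of the chat tab's output box."""

    def __init__(self):
        self.text = ""
        self._last_start = 0  # index where the most recent generation began

    def generate(self, complete):
        """Generate: keep the existing text and append a continuation."""
        self._last_start = len(self.text)
        self.text += complete(self.text)

    def redo(self, complete):
        """Redo: drop the most recent generation, then generate again."""
        self.text = self.text[: self._last_start]
        self.generate(complete)

    def clear(self):
        """Deleting the box content: the next Generate starts fresh."""
        self.text = ""
        self._last_start = 0
```

Here `complete` is any function that takes the current text and returns new text, so you can try the model with a stub such as `lambda existing: " more text"` before wiring in a real LLM call.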